How artificial intelligence can help achieve a clean energy future

There is growing attention on the links between artificial intelligence and increased energy demands. But while the power-hungry data centers being built to support AI could potentially stress electricity grids, increase customer prices and service interruptions, and generally slow the transition to clean energy, the use of artificial intelligence can also help the energy transition.

For example, use of AI is reducing energy consumption and associated emissions in buildings, transportation, and industrial processes. In addition, AI is helping to optimize the design and siting of new wind and solar installations and energy storage facilities.

On electric power grids, using AI algorithms to control operations is helping to increase efficiency and reduce costs, integrate the growing share of renewables, and even predict when key equipment needs servicing to prevent failure and possible blackouts. AI can help grid planners schedule investments in generation, energy storage, and other infrastructure that will be needed in the future. AI is also helping researchers discover or design novel materials for nuclear reactors, batteries, and electrolyzers.

Researchers at MIT and elsewhere are actively investigating aspects of those and other opportunities for AI to support the clean energy transition. At its 2025 research conference, the MIT Energy Initiative (MITEI) announced the Data Center Power Forum, a targeted research effort for MITEI member companies interested in addressing the challenges of data center power demand.

Controlling real-time operations

Customers rely on a continuous supply of electricity, and grid operators are getting help from AI to make that happen while also optimizing the storage and distribution of energy from renewable sources.

But with more installation of solar and wind farms — both of which provide power in smaller amounts, and intermittently — and the growing threat of weather events and cyberattacks, ensuring reliability is getting more complicated. “That’s exactly where AI can come into the picture,” explains Anuradha Annaswamy, a senior research scientist in MIT’s Department of Mechanical Engineering and director of MIT’s Active-Adaptive Control Laboratory. “Essentially, you need to introduce a whole information infrastructure to supplement and complement the physical infrastructure.”

The electricity grid is a complex system that requires meticulous control on time scales ranging from decades all the way down to microseconds. The challenge can be traced to the basic laws of power physics: electricity supply must equal electricity demand at every instant, or generation can be interrupted. In past decades, grid operators generally assumed that generation was fixed — they could count on how much electricity each large power plant would produce — while demand varied over time in a fairly predictable way. As a result, operators could commission specific power plants to run as needed to meet demand the next day. If some outages occurred, specially designated units would start up as needed to make up the shortfall.
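The traditional approach described above, in which each plant's output is treated as fixed and known, can be illustrated with a simple "merit order": schedule the cheapest plants first until forecast demand is covered. The sketch below is a minimal illustration under that assumption; the plant names, capacities, and costs are hypothetical, and real unit commitment also accounts for start-up costs, ramp limits, and transmission constraints.

```python
from dataclasses import dataclass


@dataclass
class Plant:
    name: str
    capacity_mw: float   # output treated as fixed and known, as in the traditional model
    cost_per_mwh: float  # hypothetical marginal cost of generation


def merit_order_commit(plants, demand_mw):
    """Schedule the cheapest plants first until forecast demand is covered.

    Returns a list of (plant name, MW scheduled). Raises ValueError if the
    fleet cannot cover demand, the case where reserve units would be started.
    """
    schedule = []
    remaining = demand_mw
    for plant in sorted(plants, key=lambda p: p.cost_per_mwh):
        if remaining <= 0:
            break
        output = min(plant.capacity_mw, remaining)
        schedule.append((plant.name, output))
        remaining -= output
    if remaining > 0:
        raise ValueError(f"shortfall of {remaining} MW: reserve units needed")
    return schedule


# Hypothetical three-plant fleet and next-day peak demand forecast.
fleet = [Plant("coal_a", 500, 30.0), Plant("gas_b", 300, 45.0), Plant("hydro_c", 200, 10.0)]
print(merit_order_commit(fleet, 850))
# → [('hydro_c', 200), ('coal_a', 500), ('gas_b', 150)]
```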

Today and in the future, that matching of supply and demand must still happen, even as the number of small, intermittent sources of generation grows and weather disturbances and other threats to the grid increase. AI algorithms provide a means of managing the complex information needed to forecast, just a few hours ahead, which plants should run, while also ensuring that the frequency, voltage, and other characteristics of the incoming power are as required for the grid to operate properly.
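A key quantity in such short-term forecasts is "net load," the demand left for dispatchable plants once wind and solar output is subtracted. The article does not say which AI models grid operators use, so the sketch below substitutes a simple statistical baseline, Holt's linear (level plus trend) exponential smoothing, to show what forecasting a few hours ahead looks like. It is not an AI model; the data and smoothing weights are hypothetical.

```python
def net_load(demand_mw, wind_mw, solar_mw):
    """Demand left for dispatchable plants after wind and solar output."""
    return [d - w - s for d, w, s in zip(demand_mw, wind_mw, solar_mw)]


def holt_forecast(series, horizon, alpha=0.5, beta=0.3):
    """Forecast `horizon` steps ahead with Holt's linear (level + trend) smoothing.

    alpha and beta are smoothing weights in (0, 1); the defaults are arbitrary.
    """
    if len(series) < 2:
        raise ValueError("need at least two observations")
    level, trend = series[0], series[1] - series[0]
    for y in series[1:]:
        prev_level = level
        level = alpha * y + (1 - alpha) * (level + trend)
        trend = beta * (level - prev_level) + (1 - beta) * trend
    return [level + h * trend for h in range(1, horizon + 1)]


# Hypothetical hourly observations in MW.
demand = [900, 950, 1000, 1050]
wind = [300, 280, 260, 240]
solar = [0, 20, 40, 60]
print(holt_forecast(net_load(demand, wind, solar), horizon=3))
# approximately [800.0, 850.0, 900.0]
```

A learned model would replace `holt_forecast` with something that also ingests weather forecasts, calendars, and other signals, but the role in the operating loop is the same.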

Moreover, AI can make possible new ways of increasing supply or decreasing demand at times when supplies on the grid run short. As Annaswamy points out, the battery in your electric vehicle (EV), as well as a battery charged by solar panels or wind turbines, can serve when needed as a source of extra power to be fed into the grid. And given real-time price signals, EV owners can choose to shift charging from a time when demand is peaking and prices are high to a time when demand, and therefore prices, are lower. In addition, new smart thermostats can be set to let the indoor temperature drop or rise within a customer-defined range when demand on the grid is peaking. And data centers themselves can be a source of demand flexibility: selected AI calculations could be delayed as needed to smooth out peaks in demand. Thus, AI can provide many opportunities to fine-tune both supply and demand as needed.
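The EV charging example can be made concrete with a small scheduler that, given hourly prices, picks the cheapest hours before a deadline to deliver the energy the car needs. This is a minimal sketch assuming prices for the whole window are known in advance (for example, a day-ahead tariff); the prices, charger rating, and energy need are hypothetical, and the sketch ignores battery charging curves and any grid-side limits.

```python
def schedule_charging(prices, energy_needed_kwh, charger_kw, deadline_hour):
    """Choose the cheapest hours before `deadline_hour` to deliver the needed energy.

    prices[h] is the (assumed known) price in $/kWh for hour h.
    Returns {hour: kWh charged}, ordered by hour.
    """
    window = range(min(deadline_hour, len(prices)))
    plan = {}
    remaining = energy_needed_kwh
    for hour in sorted(window, key=lambda h: prices[h]):  # cheapest hours first
        if remaining <= 0:
            break
        kwh = min(charger_kw, remaining)  # one hour at charger_kw delivers up to charger_kw kWh
        plan[hour] = kwh
        remaining -= kwh
    if remaining > 0:
        raise ValueError("not enough hours before the deadline")
    return dict(sorted(plan.items()))


# Hypothetical overnight prices; the high-priced hours (0, 1, 5, 6) are avoided.
prices = [0.30, 0.28, 0.12, 0.10, 0.11, 0.25, 0.35]
print(schedule_charging(prices, energy_needed_kwh=20, charger_kw=7, deadline_hour=7))
# → {2: 6, 3: 7, 4: 7}
```

The same greedy idea applies to deferrable data center jobs: move flexible work into the hours where the grid is least stressed.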

AI also makes possible “predictive maintenance.” Any equipment downtime is costly for the company and threatens shortages for the customers served. AI algorithms can learn what key performance data look like during normal operation and, when readings veer from that norm, alert operators that something might be going wrong, giving them a chance to intervene. That capability can help prevent equipment failures, reduce the need for routine inspections, increase worker productivity, and extend the lifetime of key equipment.
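The core idea of learning "normal" and flagging departures from it can be shown with one of the simplest possible detectors: a z-score threshold on a single sensor. Production systems more often use learned models over many sensors at once, and the article does not describe which methods are used; the sensor, readings, and threshold below are hypothetical.

```python
from statistics import mean, stdev


def fit_baseline(normal_readings):
    """Summarize normal operation as a mean and sample standard deviation."""
    return mean(normal_readings), stdev(normal_readings)


def flag_anomalies(readings, baseline, threshold=3.0):
    """Return indices of readings more than `threshold` standard deviations from normal."""
    mu, sigma = baseline
    return [i for i, x in enumerate(readings) if abs(x - mu) > threshold * sigma]


# Hypothetical transformer oil temperatures (deg C) logged during normal operation.
normal = [60.1, 59.8, 60.3, 60.0, 59.9, 60.2, 60.0, 59.7]
baseline = fit_baseline(normal)  # mean 60.0, standard deviation about 0.2
print(flag_anomalies([60.1, 60.4, 61.5, 59.9], baseline))
# → [2]
```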

Annaswamy stresses that “figuring out how to architect this new power grid with these AI components will require many different experts to come together.” She notes that electrical engineers, computer scientists, and energy economists “will have to rub shoulders with enlightened regulators and policymakers to make sure that this is not just an academic exercise, but will actually get implemented. All the different stakeholders have to learn from each other. And you need guarantees that nothing is going to fail. You can’t have blackouts.”

Using AI to help plan investments in infrastructure for the future

Grid companies constantly need to plan for expanding generation, transmission, storage, and more, and getting all the necessary infrastructure built and operating may take many years, in some cases more than a decade. So, they need to predict what infrastructure they’ll need to ensure reliability in the future. “It’s complicated because you have to forecast over a decade ahead of time what to build and where to build it,” says Deepjyoti Deka, a research scientist at MITEI.

One challenge with anticipating what will be needed is predicting how the future system will operate. “That’s becoming increasingly difficult,” says Deka, because more renewables are coming online and displacing traditional generators. In the past, operators could rely on “spinning reserves,” that is, generating capacity that’s not currently in use but could come online in a matter of minutes to meet any shortfall on the system. The presence of so many intermittent generators — wind and solar — means there’s now less stability and inertia built into the grid. Adding to the complication is that those intermittent generators can be built by various vendors, and grid planners may not have access to the physics-based equations that govern the operation of each piece of equipment at sufficiently fine time scales. “So, you probably don’t know exactly how it’s going to run,” says Deka.
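Why less inertia means less stability can be seen from a standard simplified relation, the aggregated swing equation. Immediately after a sudden loss of generation, the rate of change of frequency (RoCoF) is set by the system's stored rotational energy, expressed as an inertia constant H. Wind and solar plants connected through power electronics typically contribute little natural rotating inertia, so replacing conventional generators lowers H. The sketch below is a single-area approximation, and the system size and H values are hypothetical.

```python
def initial_rocof(power_loss_mw, inertia_h_s, system_base_mva, nominal_hz=60.0):
    """Initial rate of change of frequency (Hz/s) right after losing generation.

    Aggregated swing-equation approximation: df/dt = -dP * f0 / (2 * H * S),
    with H the system inertia constant (s) on base S (MVA). Ignores governor
    and load response, which come into play only as frequency starts to move.
    """
    return -power_loss_mw * nominal_hz / (2 * inertia_h_s * system_base_mva)


# Same 1,000 MW loss on a hypothetical 50,000 MVA system: halving H doubles the RoCoF.
print(initial_rocof(1000, inertia_h_s=5.0, system_base_mva=50_000))  # about -0.12 Hz/s
print(initial_rocof(1000, inertia_h_s=2.5, system_base_mva=50_000))  # about -0.24 Hz/s
```

A faster initial frequency decline leaves less time for reserves to respond before protective limits are reached.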

And then there’s the weather. Determining the reliability of a proposed future energy system requires knowing what it’ll be up against in terms of weather. The future grid has to be reliable not only in everyday weather, but also during low-probability but high-risk events such as hurricanes, floods, and wildfires, all of which are becoming more and more frequent, notes Deka. AI can help by predicting such events and even tracking changes in weather patterns due to climate change.
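One common way planners quantify reliability is the loss-of-load probability: the chance that available capacity falls short of demand. The article does not specify which reliability metrics or models are used; the sketch below is a minimal Monte Carlo estimate assuming each generating unit fails independently with a fixed forced-outage rate, with an extreme weather event represented crudely as higher outage rates. The system is hypothetical, and real storms often cause correlated outages, which is exactly what makes them hard to model.

```python
import random


def loss_of_load_probability(units, peak_demand_mw, trials=100_000, seed=0):
    """Monte Carlo estimate of P(available capacity < peak demand).

    units: list of (capacity_mw, forced_outage_rate); each unit is assumed to
    fail independently with probability forced_outage_rate.
    """
    rng = random.Random(seed)
    shortfalls = 0
    for _ in range(trials):
        available = sum(cap for cap, outage_rate in units if rng.random() >= outage_rate)
        if available < peak_demand_mw:
            shortfalls += 1
    return shortfalls / trials


# Hypothetical system: three 400 MW units serving a 900 MW peak.
normal_weather = [(400, 0.05)] * 3
storm = [(400, 0.20)] * 3  # crude stand-in for an extreme event raising outage rates
print(loss_of_load_probability(normal_weather, 900))  # near 1 - 0.95**3, about 0.14
print(loss_of_load_probability(storm, 900))           # near 1 - 0.80**3, about 0.49
```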

Deka points out another, less obvious benefit of the speed of AI analysis. Any infrastructure development plan must be reviewed and approved, often by several regulatory and other bodies. Traditionally, an applicant would develop a plan, analyze its impacts, and submit the plan to one set of reviewers. After making any requested changes and repeating the analysis, the applicant would resubmit a revised version to the reviewers to see if the new version was acceptable. AI tools can speed up the required analysis so the process moves along more quickly. Planners can even reduce the number of times a proposal is rejected by using large language models to search regulatory publications and summarize what’s important for a proposed infrastructure installation.

Harnessing AI to discover and exploit advanced materials needed for the energy transition

“Use of AI for materials development is booming right now,” says Ju Li, MIT’s Carl Richard Soderberg Professor of Power Engineering. He notes two main directions.

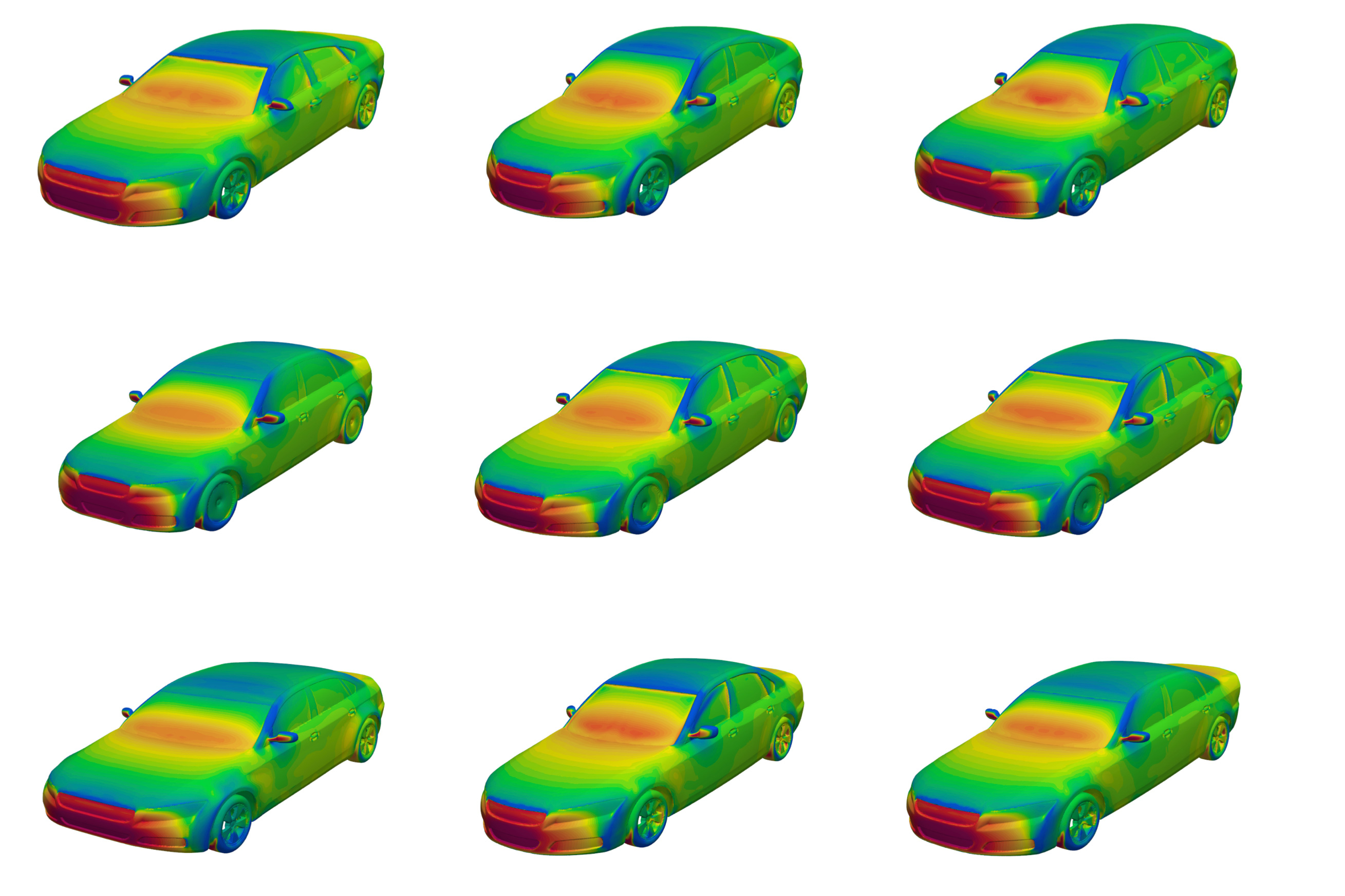

First, AI makes possible faster physics-based simulations at the atomic scale. The result is a better atomic-level understanding of how composition, processing, structure, and chemical reactivity relate to the performance of materials. That understanding provides design rules to help guide the development and discovery of novel materials for energy generation, storage, and conversion needed for a sustainable future energy system.

And second, AI can help guide experiments in real time as they take place in the lab. Li explains: “AI assists us in choosing the best experiment to do based on our previous experiments and — based on literature searches — makes hypotheses and suggests new experiments.”

He describes what happens in his own lab. Human scientists interact with a large language model, which then makes suggestions about what specific experiments to do next. The human researcher accepts or modifies the suggestion, and a robotic arm responds by setting up and performing the next step in the experimental sequence, synthesizing the material, testing the performance, and taking images of samples when appropriate. Based on a mix of literature knowledge, human intuition, and previous experimental results, AI thus coordinates active learning that balances the goal of reducing uncertainty against that of improving performance. And, as Li points out, “AI has read many more books and papers than any human can, and is thus naturally more interdisciplinary.”
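The balance between reducing uncertainty and improving performance is the classic exploration/exploitation trade-off. The article does not name the algorithm used in Li's lab, and that system also draws on a language model and literature knowledge; as a hedged stand-in, the sketch below uses UCB1, a standard multi-armed bandit rule, to decide which of several hypothetical synthesis recipes to try next. Each recipe's score is its average result so far plus a bonus that shrinks the more often it has been tested, so rarely tried recipes still get a chance. Experiment results here are simulated.

```python
import math
import random


def ucb1_pick(counts, means, t):
    """UCB1: try every recipe once, then pick the highest mean + sqrt(2 ln t / n)."""
    for arm, n in enumerate(counts):
        if n == 0:
            return arm
    return max(range(len(counts)),
               key=lambda a: means[a] + math.sqrt(2 * math.log(t) / counts[a]))


def run_campaign(run_experiment, n_recipes, budget):
    """Run `budget` experiments, letting UCB1 choose which recipe to try each time."""
    counts = [0] * n_recipes
    means = [0.0] * n_recipes
    for t in range(1, budget + 1):
        arm = ucb1_pick(counts, means, t)
        result = run_experiment(arm)
        counts[arm] += 1
        means[arm] += (result - means[arm]) / counts[arm]  # running average
    return counts, means


# Simulated lab: three hypothetical recipes whose true scores are unknown to the picker.
rng = random.Random(42)
true_scores = [0.2, 0.5, 0.8]
counts, means = run_campaign(lambda arm: true_scores[arm] + rng.gauss(0, 0.1), 3, 300)
print(counts)  # most experiments should go to recipe 2
```

In a real campaign the candidates would be continuous (compositions, temperatures), and a surrogate model such as a Gaussian process would supply the uncertainty estimate, but the decision rule has the same shape.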

The outcome, says Li, is both better design of experiments and speeding up the “work flow.” Traditionally, the process of developing new materials has required synthesizing the precursors, making the material, testing its performance and characterizing the structure, making adjustments, and repeating the same series of steps. AI guidance speeds up that process, “helping us to design critical, cheap experiments that can give us the maximum amount of information feedback,” says Li.

“Having this capability certainly will accelerate material discovery, and this may be the thing that can really help us in the clean energy transition,” he concludes. “AI [has the potential to] lubricate the material-discovery and optimization process, perhaps shortening it from decades, as in the past, to just a few years.”

MITEI’s contributions

At MIT, researchers are working on various aspects of the opportunities described above. In projects supported by MITEI, teams are using AI to better model and predict disruptions in plasma flows inside fusion reactors — a necessity in achieving practical fusion power generation. Other MITEI-supported teams are using AI-powered tools to interpret regulations, climate data, and infrastructure maps in order to achieve faster, more adaptive electric grid planning. AI-guided development of advanced materials continues, with one MITEI project using AI to optimize solar cells and thermoelectric materials.

Other MITEI researchers are developing robots that can learn maintenance tasks based on human feedback, including physical intervention and verbal instructions. The goal is to reduce costs, improve safety, and accelerate the deployment of renewable energy infrastructure. And MITEI-funded work continues on ways to reduce the energy demand of data centers, from designing more efficient computer chips and computing algorithms to rethinking the architectural design of the buildings themselves, for example to increase airflow and so reduce the need for air conditioning.

In addition to providing leadership and funding for many research projects, MITEI acts as a convenor, bringing together interested parties to consider common problems and potential solutions. In May 2025, MITEI’s annual spring symposium — titled “AI and energy: Peril and promise” — brought together AI and energy experts from across academia, industry, government, and nonprofit organizations to explore AI as both a problem and a potential solution for the clean energy transition. At the close of the symposium, William H. Green, director of MITEI and Hoyt C. Hottel Professor in the MIT Department of Chemical Engineering, noted, “The challenge of meeting data center energy demand and of unlocking the potential benefits of AI to the energy transition is now a research priority for MITEI.”

© Image: Igor Borisenko/iStock